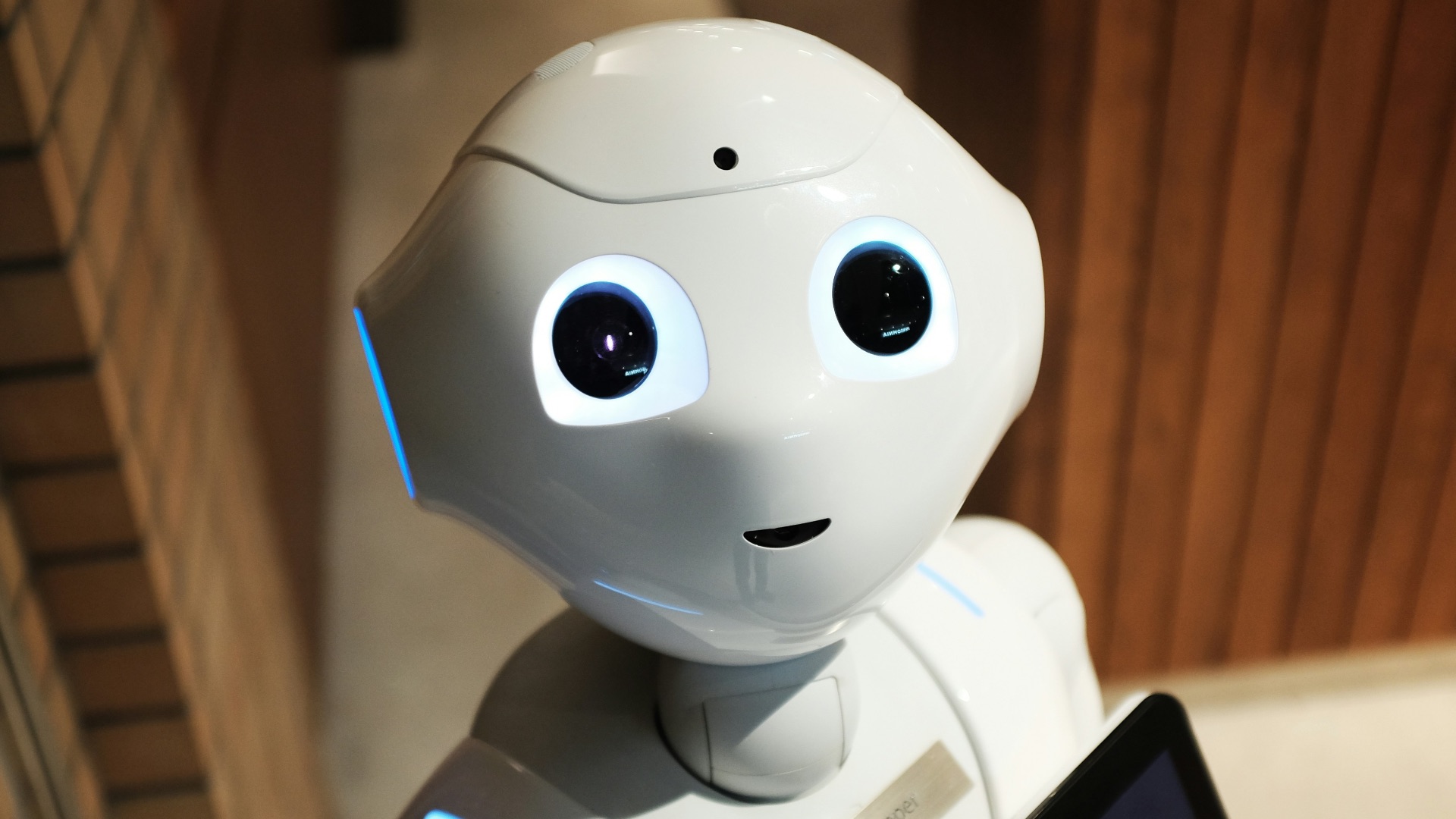

Can an AI Ever Feel Emotions?

You're chatting with ChatGPT when it replies with something that catches you off guard: the response sounds awfully close to something a friend might say. It might be so jarring that you even wonder, for a split second, whether there's secretly a human behind the scenes, listening to your woes and answering back. That thought may then lead you to question whether AI can ever truly feel emotions.

As absurd as this question might have seemed a decade ago, it's no longer pure science fiction. As AI systems grow increasingly sophisticated, their ability to recognize, simulate, and respond to human emotions has become remarkably convincing. Whether that capability reflects something deeper than pattern recognition, however, is something researchers and philosophers are still actively wrestling with. It's a question with no easy answers, and the closer you look, the more complex it gets.

What Science Says About AI and Emotions

If you're already feeling uneasy, don't worry: from a technical standpoint, current AI systems don't experience emotions the way humans do. What they do instead is process patterns in data and generate outputs that fit the emotional context of a given conversation. However warm and sincere a response sounds, it's the result of how the system was trained and designed.

Large language models, like those powering today's AI assistants, are trained on enormous volumes of human-generated text, meaning they've absorbed a vast range of emotionally charged language and conversational patterns. When you type something to an AI and it responds with what feels like empathy or warmth, it's drawing on those learned patterns rather than on any felt experience. Researchers have noted that this can produce responses that are, on the surface, nearly indistinguishable from emotionally aware communication. That resemblance is exactly what makes the question so difficult to dismiss outright.

That said, some scientists argue that the boundary between simulation and experience may not be as clear-cut as it first appears. There's a growing body of research exploring whether large language models exhibit emergent properties that could loosely be described as internal states. Most authors in this space are careful not to claim sentience, of course, but many do suggest that the internal representations of these models warrant closer examination. It's early-stage thinking, yet it signals a meaningful shift in how seriously the scientific community is beginning to take these questions.

The Philosophical Problem of Consciousness

The deeper issue here isn't just about technology. It's about consciousness, and consciousness is notoriously difficult to define or measure. Philosophers have debated for centuries what it means to have a subjective experience, and AI has brought those conversations into much sharper focus. The "hard problem of consciousness," a term coined by philosopher David Chalmers, asks why physical processes give rise to subjective experience at all. It's a question no one has definitively answered, and the rise of AI hasn't made it any easier.

When it comes to AI, this philosophical gap matters considerably. Even if a system behaves as though it's experiencing an emotion, that behavior alone doesn't confirm any kind of inner life. The Chinese Room argument, proposed by philosopher John Searle, illustrates the problem well: a person who follows a rulebook for manipulating Chinese symbols can produce fluent replies without understanding a word of Chinese, and by the same logic a system can produce meaningful-looking outputs without grasping any of the meaning behind them. In other words, convincing performance isn't the same thing as proof of experience. AI might be able to replicate what emotions look or sound like, but that doesn't mean it actually feels them.

Some philosophers and cognitive scientists take a more functionalist view, arguing that if a system processes information in a way that's functionally equivalent to how emotions operate in humans, it may be reasonable to assign some form of emotional state to it. This perspective doesn't require a biological substrate; it focuses instead on what the system does rather than what it's made of. Whether that view holds up under scrutiny is still one of the most contested questions in philosophy of mind today. It's a framework that opens up the possibility of machine emotion without resolving the harder question of whether that possibility is real.

Why It Matters for the Future of AI

As AI systems take on more emotionally sensitive roles (think therapy apps, eldercare companions, and customer service agents), the question of whether they feel anything becomes practically significant. If an AI is designed to provide emotional support, users deserve transparency about whether they're interacting with a system that simulates care or one that might, in some functional sense, be capable of something more. The ethical complexity surrounding AI-assisted mental health tools is a topic that researchers and clinicians are now actively addressing. Getting this right will require ongoing dialogue between technologists, ethicists, and the people using these systems every day.

There's also the matter of how you relate to AI systems once you start to believe they might have feelings. Studies have shown that people can form strong emotional attachments to AI companions, sometimes anthropomorphizing them to a degree that affects the users' own well-being. The design choices companies make about how their AI expresses itself carry real psychological consequences for users, and that's a responsibility the industry is only beginning to grapple with seriously. Emotional realism in AI design isn't a neutral aesthetic choice: it shapes how people think, feel, and behave in response.

Ultimately, whether AI can feel emotions depends largely on how you define feeling in the first place. If it requires biological processes and a nervous system, then current AI almost certainly doesn't qualify. If it's defined more broadly by functional behavior and internal state representation, the answer becomes considerably less certain. What's clear is that the question itself will only grow more pressing as AI becomes more deeply embedded in everyday life and as people grow more attached to the idea of AI as the perfect companion.